Software is on track to undergo substantial change. As traditional software evolves into AI-powered software, it gives developers more speed and power, along with a new generation of tools and interfaces that couldn’t exist before, such as AI-powered UIs, multi-agent systems, and infrastructure for executing AI-generated tasks.

After spending some time in San Francisco working on a cloud runtime for AI agents and talking to developers, founders, and AI engineers, I am writing about the differences between LLM-powered software and traditional software, and the challenges that come with it.

The LLM-powered tech stack

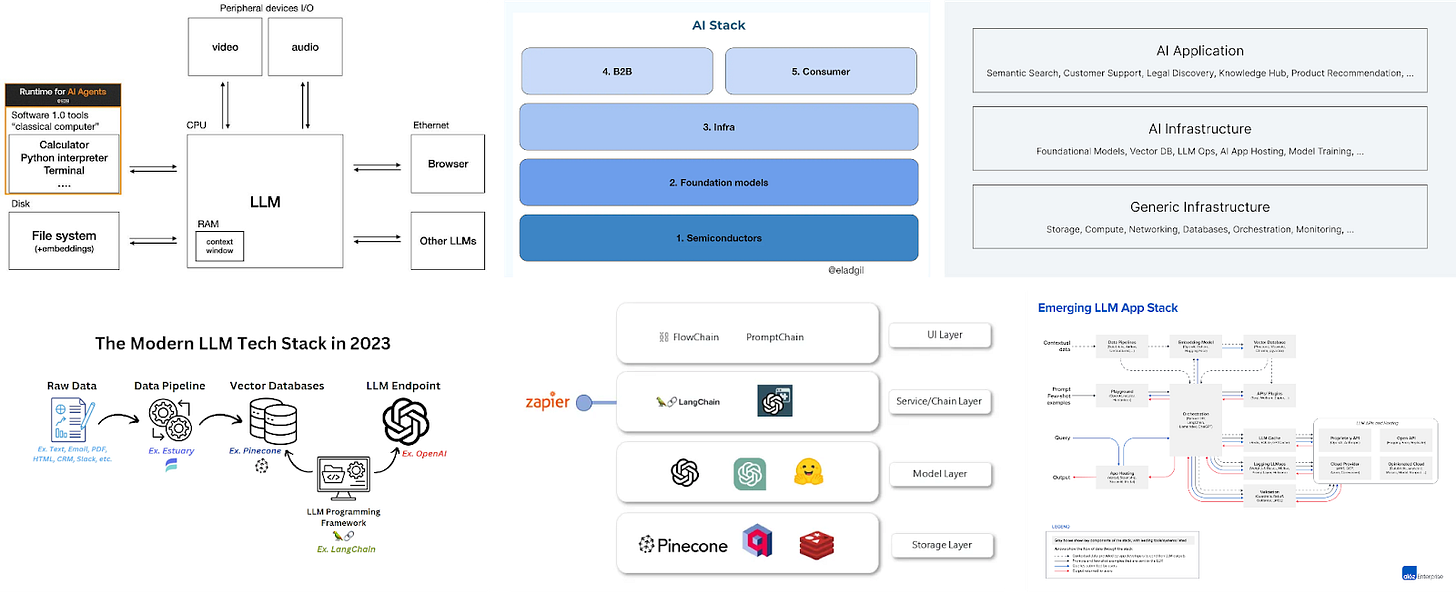

Most of us have probably seen at least a few diagrams mapping the LLM landscape and the LLM-powered tech stack …

Just to mention a few:

Image 1: The “LLMs are new kernel” diagram by Andrej Karpathy is probably already part of the OG knowledge in Silicon Valley. Here, it is updated with a runtime for agents.

Image 2: A simplified AI stack by Elad Gil, the author of High Growth Handbook, who himself notes how many open questions keep arising around AI.

Image 3: Yet another categorization, by founders and CEOs of Pinecone, Vercel, AI21 Labs, Anyscale, and LangChain.

… So what are the key components to focus on? In this article, I will go through the following categories:

Operating system

Applications

Frontend

Backend

DevOps tools

Testing tools

Hardware

My intention is not to create mutually exclusive, collectively exhaustive categories, but rather to give an overview of the interesting elements that make up LLM-powered software, from the perspective of someone on a team building one piece of the ecosystem’s puzzle.

Elements of LLM-powered software:

1. Operating system

Initially, LLMs were seen mainly as a new kind of search tool, powering products like Perplexity or Phind. However, it is wrong to view LLMs only as chatbots, just as it was wrong to view early computers as mere calculators.

LLMs as the new kernel

LLMs generate text, audio, and visual output, but also code, which powers the new code interpreters inside AI apps. They are the foundational building blocks of the new AI software, and Andrej Karpathy has even drawn a parallel between LLMs and the kernel of an operating system.

This evolution points away from hard-coded rules and toward systems and apps that are fully personalized to each user, where code can potentially change on the fly. If an LLM is the new kernel, then services like sandboxed environments can become the new cloud runtime for LLM-powered applications.

2. Applications

Advances in computing power and neural networks in the 2010s led to rapid progress in the AI field. AI was frequently used to optimize ads and recommendations, followed by machine learning tasks like image classification. In the last few years (or months), the “next big thing” has been LLM-powered (semi-)autonomous agents and the applications built around them.

AI Agents

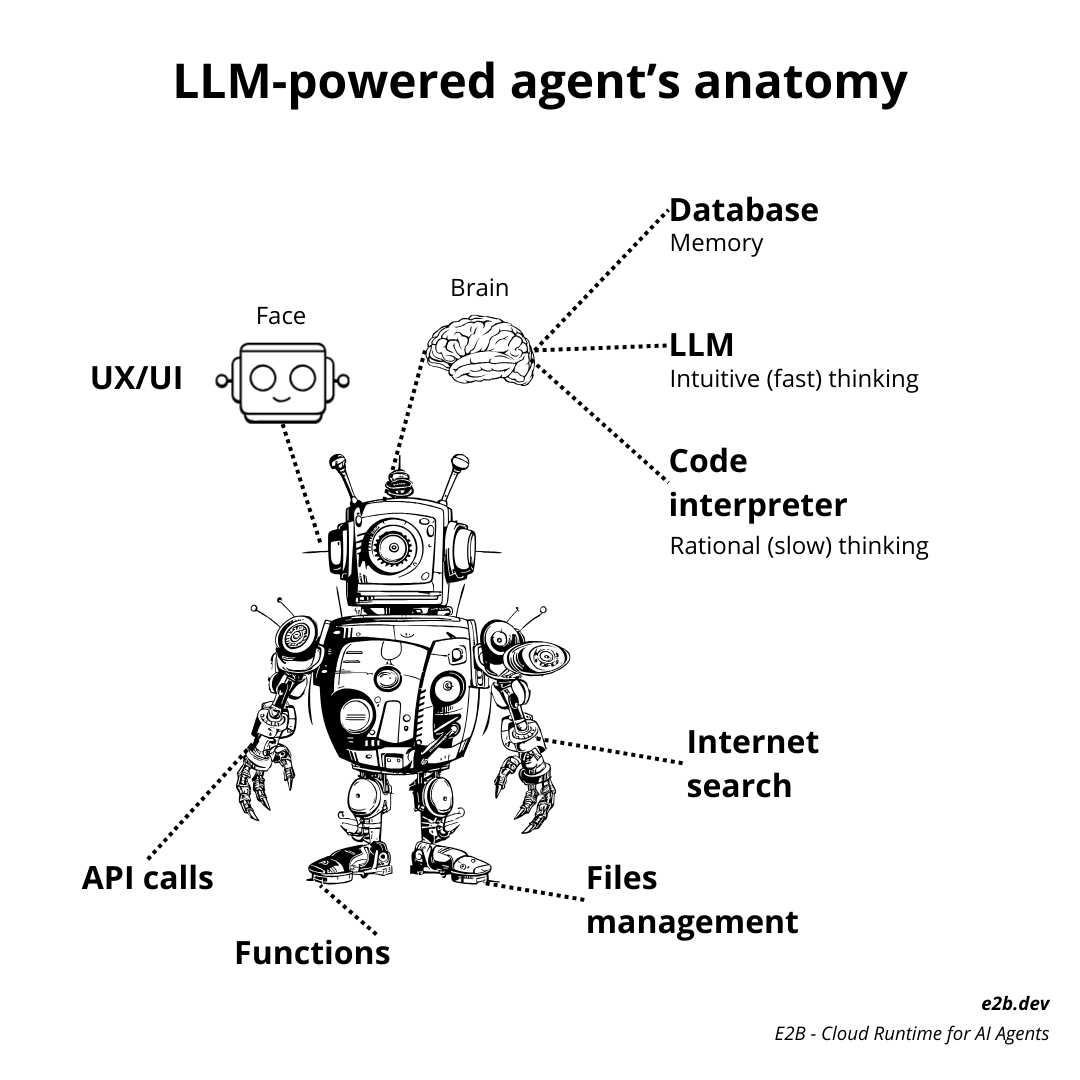

Agents are LLM-powered assistants that are characterized by:

Leveraging both short-term and long-term memory

Tool usage (e.g., accessing the internet and apps via API calls, defining new functions to use, connecting to users’ data, and managing files).

Performing more complex multi-step reasoning (as opposed to simpler AI assistants).

Given the stochasticity of LLMs, the third characteristic in particular requires a new type of robust engine that powers the agent’s “brain” as a complement to the LLM.
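To make the three characteristics concrete, here is a minimal sketch of an agent loop in Python. It does not reflect how any particular framework works: the `plan` callable is a hypothetical stand-in for the LLM, the scripted planner and the `add` tool are made up for illustration, short-term memory is simply the list of steps for the current task, and long-term memory is a dictionary that survives across tasks. The step limit guards against a stochastic planner that never decides to stop.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

# Hypothetical types: a tool maps a string argument to a string result, and the
# planner (standing in for the LLM) maps the history to a decision tuple.
Tool = Callable[[str], str]
Decision = Tuple[str, str, str]  # ("tool", tool_name, argument) or ("final", answer, "")


@dataclass
class Agent:
    plan: Callable[[List[str]], Decision]
    tools: Dict[str, Tool]
    long_term: Dict[str, str] = field(default_factory=dict)  # survives across tasks
    max_steps: int = 5

    def run(self, task: str) -> str:
        short_term = [f"task: {task}"]  # scratchpad for this task only
        for _ in range(self.max_steps):  # multi-step reasoning, bounded
            kind, first, second = self.plan(short_term)
            if kind == "final":
                self.long_term[task] = first  # remember the outcome
                return first
            result = self.tools[first](second)  # tool call (API, file, code run, ...)
            short_term.append(f"{first}({second}) -> {result}")
        return "gave up: step limit reached"


def scripted_planner(history: List[str]) -> Decision:
    # Stand-in for an LLM: call the "add" tool once, then answer with its result.
    if len(history) == 1:
        return ("tool", "add", "2,3")
    return ("final", history[-1].split("-> ")[-1], "")


agent = Agent(
    plan=scripted_planner,
    tools={"add": lambda s: str(sum(int(x) for x in s.split(",")))},
)
print(agent.run("add 2 and 3"))  # 5
```

In a real agent, `plan` would send the history to a model and parse its reply into a decision; the loop structure stays the same.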

LLM-powered Code Interpreters

An essential component of reliable AI agents is a code interpreter, which enables them to complete more complex tasks that often require executing code or following multi-step plans. Without a code interpreter, an agent has to rely on the LLM alone, which is non-deterministic by nature and might hallucinate or avoid more complicated tasks.

Here are some examples of tasks that, according to one test, an AI agent with a code interpreter performs significantly better:

Simulate 100 games of poker and tell me the outcomes.

Write the Guess the Number game and write a test for it, make sure all tests pass.

Analyze NVIDIA stock and visualize its development until 2030.

Play a Blackjack game with me.

In a sense, code interpreters make LLMs more precise and powerful. Examples of popular AI apps equipped with a code interpreter include Microsoft’s AutoGen and Open Interpreter.
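The core loop of a code interpreter can be sketched as: take the code the LLM produced, run it in a fresh process, and return stdout, stderr, and the exit code so the LLM can read the result (or the traceback) and try again. The sketch below is a minimal Python illustration with a hypothetical `run_generated_code` helper; it is not the implementation used by AutoGen or Open Interpreter. A subprocess with a timeout and a throwaway working directory only protects the host from crashes and hangs; it is not a security boundary, which is why untrusted code belongs in an isolated environment such as a sandbox.

```python
import subprocess
import sys
import tempfile
from dataclasses import dataclass
from typing import Optional


@dataclass
class ExecutionResult:
    stdout: str
    stderr: str
    exit_code: Optional[int]  # None means the run hit the timeout


def run_generated_code(code: str, timeout_s: float = 5.0) -> ExecutionResult:
    """Run LLM-generated Python in a fresh interpreter and capture its output.

    Not a sandbox: the child can still touch the network and the filesystem.
    """
    with tempfile.TemporaryDirectory() as workdir:  # throwaway working directory
        try:
            proc = subprocess.run(
                [sys.executable, "-I", "-c", code],  # -I: ignore env vars and user site-packages
                cwd=workdir,
                capture_output=True,
                text=True,
                timeout=timeout_s,
            )
        except subprocess.TimeoutExpired:
            return ExecutionResult(stdout="", stderr=f"timed out after {timeout_s}s", exit_code=None)
    return ExecutionResult(proc.stdout, proc.stderr, proc.returncode)


ok = run_generated_code("print(sum(range(10)))")
print(ok.stdout.strip(), ok.exit_code)  # 45 0
failed = run_generated_code("1 / 0")
print(failed.exit_code, "ZeroDivisionError" in failed.stderr)  # 1 True
```

Returning the traceback instead of raising is a deliberate choice: the error text is exactly what the LLM needs to fix its own code on the next step.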

3. Frontend

We as humans have advanced from being mere programmers to becoming managers of an AI agent or a team of agents. However, we still need to address the challenge of how humans can supervise and correct the decisions made by AI agents. This may involve creating a “code editor 2.0” and a whole UI that is native to LLMs.

AI-native UI

There is a shift towards an AI-native web where humans play an active role in the loop instead of being mere observers of AI processes. A nice example is v0 by Vercel, a generative UI tool that converts text and image prompts into React UIs.

Another example comes from Gumloop, an LLM-powered automation tool for enterprise workflows.

“I see generative UI as a problem of execution and user experience more than a technical problem,” says Max Brodeur-Urbas, the founder.

Jakub Jurovych, the founder of Deepnote (an AI-powered data workspace), thinks of AI as a new “grammar” in UI design.

“It doesn’t happen often that a new piece of grammar is introduced. Definitely not at this scale. It happened in the 1960s with the introduction of the mouse, leading to GUIs as we know them today (windows, icons, links). Then it happened again with the release of the iPhone, leading to new UIs optimized for mobile devices. And now it’s going to happen again with the AI/LLMs as the trigger. We are entering the era of AIUI.”

4. Backend

Infrastructure

I will deliberately skip the infrastructure layer of the AI tech stack, as it is too broad a topic to cover in a few paragraphs.

Frameworks and languages

Agentic frameworks like LangChain are still widely used because they allow a quick start with the technology. There is, however, a tradeoff between ease of use and freedom to customize. Compare AutoGen and CrewAI, two popular multi-agent frameworks. CrewAI is built on top of LangChain, and many developers chose it because they were already familiar with LangChain, while others argued that AutoGen offers more flexibility, for example precise control over how agents process information, access external APIs, and use custom scripts.

Another potential danger of frameworks is vendor lock-in. Companies that have already paid for part of a technology stack are unlikely to switch to something else, so it is wise to build on a vendor-agnostic stack.

Therefore, it might be a better investment to build an AI app with plain Python or JavaScript/TypeScript. Speaking of which, there is an ongoing debate about the best language for building AI applications.

Guillermo Rauch, CEO of Vercel, says that “The AI engineer of the future is a TypeScript engineer”.

The trend towards TypeScript is something we (as the E2B team) can confirm: usage of our JS/TS SDK is growing faster than that of our Python SDK. The Langfuse team mentioned a similar trend in an informal discussion.

Databases and RAG

A vector database stores data as vectors (embeddings), which are numerical representations of that data. Unlike traditional databases that focus on structured data, vector databases excel at handling unstructured and complex data types, such as images, audio, and text.

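Retrieval-augmented generation (RAG) and vector databases make your LLM hallucinate less and answer more precisely, because relevant documents are retrieved and added to the prompt as context.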
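Retrieval in a RAG pipeline boils down to embedding the question, finding the stored vectors most similar to it, and pasting the matching documents into the prompt. The sketch below assumes made-up three-dimensional embeddings (a real setup would get them from an embedding model) and uses brute-force cosine similarity, whereas production vector databases typically rely on approximate nearest-neighbor indexes. The function names are hypothetical.

```python
import math
from typing import Dict, List


def cosine_similarity(a: List[float], b: List[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(query: List[float], store: Dict[str, List[float]], k: int = 2) -> List[str]:
    # Brute-force exact search: score every stored vector, keep the k best.
    ranked = sorted(store, key=lambda doc: cosine_similarity(query, store[doc]), reverse=True)
    return ranked[:k]


def build_prompt(question: str, context: List[str]) -> str:
    # Augment the prompt with retrieved context so the LLM answers from it.
    bullets = "\n".join(f"- {doc}" for doc in context)
    return f"Answer using only this context:\n{bullets}\n\nQuestion: {question}"


# Made-up 3-dimensional embeddings; real ones come from an embedding model.
store = {
    "E2B runs AI-generated code in cloud sandboxes.": [0.9, 0.1, 0.0],
    "Weaviate is a vector database.": [0.1, 0.9, 0.1],
    "Rabbit launched the R1 in January 2024.": [0.0, 0.1, 0.9],
}
question_vector = [0.8, 0.2, 0.1]  # pretend embedding of the question below
print(build_prompt("Where can I run LLM-generated code?", top_k(question_vector, store, k=1)))
```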

VC firms have recently invested heavily in vector database companies. Weaviate closed a $16M Series A round, Chroma raised $18M, and Pinecone raised $28M at a valuation of almost $700M.

“The vector database we chose is Weaviate hosted on Railway,” says Ted Spare, co-founder of RubricLabs and Maige.

5. DevOps Tools

Containerization and Orchestration Tools

In the context of AI, we need tools for managing application processes in containers. Such processes might stem from the code-interpreter-type engine mentioned earlier; examples include:

File management (creating, uploading, and downloading files)

Internet access

Using existing functions or defining new ones

Code execution

API calls to applications (e.g., e-mail or calendar)

Some applications, like AutoGen, use Docker to run LLM-generated code, but running AI apps locally has its limitations. By its nature, code produced by an LLM is untrusted, so it should be executed in an isolated environment, such as a cloud sandbox.

Cloud 2.0

There are a few major players among cloud providers:

AWS

Google Cloud Platform

Microsoft Azure

Some developers have used one of these to build an in-house cloud runtime for their AI app or agent, but doing so is time-consuming and challenging for developers who are not infrastructure engineers.

Therefore, companies like E2B can be seen as “cloud 2.0”: a runtime tailored for AI apps that gives them an underlying code interpreter. It is exactly this code execution layer that makes the LLM actionable.

6. Testing tools

As mentioned, AI agents equipped with code interpreters can verify their own output by executing the code they produce. However, that isn’t enough: one might also want to compare LLMs with each other or monitor how well different prompts perform.

Monitoring and analytics

LLMs generate non-deterministic output by nature, which creates an opportunity for many startups to offer various services for the apps built around them. For instance:

AgentOps (observability, evals, and replay analytics)

Autoblocks (A/B test prompts, context, and more, user analytics)

Langfuse (tracing, evaluation, prompt management, and metrics)

ReactEval (evaluation of LLM-generated ReactJS code)
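Most of these tools start from the same primitive: recording every LLM call (inputs, output or error, latency) together with metadata such as the prompt version, so runs can be compared later. The sketch below shows that idea with a hypothetical `traced` decorator and an in-memory list; it does not reproduce the API of AgentOps, Autoblocks, or Langfuse, and the two `summarize_*` functions are stand-ins for real LLM calls using prompt variants A and B.

```python
import functools
import time
import uuid
from collections import defaultdict
from typing import Any, Callable, Dict, List

TRACES: List[Dict[str, Any]] = []  # in-memory sink; a real setup would export these


def traced(prompt_version: str) -> Callable:
    """Hypothetical decorator: record input, output or error, and latency of each call."""
    def decorator(fn: Callable) -> Callable:
        @functools.wraps(fn)
        def wrapper(*args: Any, **kwargs: Any) -> Any:
            record = {"id": str(uuid.uuid4()), "fn": fn.__name__,
                      "prompt_version": prompt_version, "args": args, "kwargs": kwargs}
            start = time.perf_counter()
            try:
                record["output"] = fn(*args, **kwargs)
                return record["output"]
            except Exception as exc:
                record["error"] = repr(exc)
                raise
            finally:  # runs on success and on failure
                record["latency_s"] = time.perf_counter() - start
                TRACES.append(record)
        return wrapper
    return decorator


@traced(prompt_version="A")
def summarize_a(text: str) -> str:
    return text[:20]  # stand-in for an LLM call with prompt A


@traced(prompt_version="B")
def summarize_b(text: str) -> str:
    return text.split(".")[0]  # stand-in for an LLM call with prompt B


def stats_by_version(traces: List[Dict[str, Any]]) -> Dict[str, Dict[str, float]]:
    # Aggregate calls, errors, and total latency per prompt version (A/B comparison).
    stats: Dict[str, Dict[str, float]] = defaultdict(
        lambda: {"calls": 0, "errors": 0, "total_latency_s": 0.0})
    for t in traces:
        s = stats[t["prompt_version"]]
        s["calls"] += 1
        s["errors"] += int("error" in t)
        s["total_latency_s"] += t["latency_s"]
    return dict(stats)


for doc in ["Agents use tools. They also plan.", "Vector DBs store embeddings."]:
    summarize_a(doc)
    summarize_b(doc)
print(stats_by_version(TRACES))
```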

Evaluation frameworks

Benchmarking and evaluation of LLM output has been a big topic as well.

Frontend-specific solutions, such as AI agents like GitWit and evaluation frameworks for frontend code, are being built. One of the first LLM benchmarks for frontend is ReactEval, which runs hundreds of tests in the browser in isolated sandbox instances.
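Code-generation benchmarks commonly sample n solutions per task, run each one against the task’s tests in a sandbox, and report pass@k: the probability that at least one of k samples passes. The sketch below uses the unbiased estimator introduced with OpenAI’s HumanEval benchmark, pass@k = 1 - C(n-c, k) / C(n, k), where c is the number of passing samples. Whether ReactEval reports this exact metric is an assumption, and the task names and results are invented.

```python
from math import comb
from typing import Dict, List


def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k: chance that at least one of k samples, drawn from n with c passing, passes."""
    if not 0 <= c <= n or not 1 <= k <= n:
        raise ValueError("need 0 <= c <= n and 1 <= k <= n")
    if n - c < k:  # every group of k samples contains a passing one
        return 1.0
    return 1.0 - comb(n - c, k) / comb(n, k)


def benchmark_score(results: Dict[str, List[bool]], k: int) -> float:
    """Mean pass@k over tasks; each list holds the test outcome of one sampled solution."""
    scores = [pass_at_k(len(runs), sum(runs), k) for runs in results.values()]
    return sum(scores) / len(scores)


# Invented results: 4 sampled solutions per task, each run against the task's tests.
results = {
    "button": [True, False, False, False],
    "navbar": [True, True, True, True],
    "modal": [False, False, False, False],
}
print(round(benchmark_score(results, k=1), 3))  # 0.417
print(benchmark_score(results, k=2))            # 0.5
```

Sampling several solutions per task, rather than one, smooths out the stochasticity of the model, which matters for exactly the non-deterministic output discussed above.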

7. AI-centered hardware

To complete the picture, here are some recent viral launches of hardware powered by generative AI:

Humane launched a wearable AI Pin in November 2023.

Rabbit launched the R1, a portable consumer AI device, in January 2024.

In March 2024, Open Interpreter launched the 01 Light, a portable voice assistant that controls your computer and learns new skills.

To conclude, here is my favorite piece of AI hardware: Grimes’ spider, powered by Open Interpreter. I think this spider is the product we all need to adopt in our households and daily lives, because look how cool it is!